Will a PRS beat PSA for prostate-cancer screening?

Volume 13, Issue 7 | February 19, 2026

ALSO IN THIS ISSUE

FDA recommends testing before use of common chemo drugs

AI stethoscope vastly improves dx of heart-valve disease

Time to require all mammograms be AI-enhanced?

FDA recommends testing before use of common chemo drugs

5-fluorouracil (5-FU) is a cornerstone of cancer chemotherapy. But about a third of people have a variation in the gene that codes for the enzyme that breaks down the drug. If one of these folks is treated with 5-FU, the drug builds up in their body to levels that can be high enough to kill them.

Back in March 2024, we reported that the FDA had added warnings to 5-FU’s label. But the agency didn’t go so far as to recommend testing for variations in the gene in question, called DPYD. A KFF Health News article reported at the time that the agency “could not endorse the 5-FU toxicity tests because it’s never reviewed them.”

COMMENTARY: Looks like those reviews have happened. We were pleased to hear that, as of early February 2026, the FDA recommends testing for DPYD variants before treating patients with either 5-FU or another chemotherapy drug, capecitabine. This is the essence of using biomarkers to truly personalize medicine.

Will a PRS beat PSA for prostate-cancer screening?

Can a polygenic risk score (PRS) do a better job of screening men for prostate cancer than the prostate-specific antigen test (PSA)? According to a recent article in Nature Cancer, it looks like the answer may one day be yes.

The PRS in question was developed by studying nearly 600,000 men enrolled in the Million Veteran Program. It looks at 601 gene mutations and tempers the result with family history. The Broad Institute and the US Department of Veterans Affairs are now testing the model in a clinical trial.

Reports from validation studies of this PRS are encouraging. Those placed in the lowest-risk 20% of men had only one-third the average risk of developing “clinically significant” prostate cancer, while those in the highest-risk 20% were nearly ten times more likely than average to do so. That’s a lot better than elevated PSA (>3 ng/mL) alone, which in the group evaluated had only a 13% positive predictive value (i.e., 87% of positive results were false).

COMMENTARY: Of course, it is not either/or: this PRS is a “one and done” genetic assessment that identifies who is at higher or lower inherited prostate cancer risk, while PSA is a direct sign of prostate physiology. If the upcoming clinical trial supports this encouraging study, the PRS would significantly help identify those for whom a rising PSA level is most concerning and warrants more intensive follow-up. On the low-risk side, it would also narrow down the list of folks who would benefit from regular PSA screening. The words “clinically significant” are key here, because the majority of men who live long enough (i.e., into their 80s or 90s) will die with, but not of, prostate cancer.

AI stethoscope vastly improves dx of heart-valve disease

The first step of diagnosing heart-valve disease involves three very low-tech devices: a stethoscope and a pair of ears. That leaves the diagnosis at the mercy of the quality of the ears (which almost inevitably declines over time) and the attention and experience of the brain between the ears.

A recent paper found that using a machine-learning (ML)-enabled stethoscope roughly doubled clinicians’ sensitivity at detecting moderate to severe heart-valve problems, compared with using a traditional stethoscope (92% vs. 46%).

COMMENTARY: Listening for heart murmurs (the telltale sign of valvular disease) has always been a highly user-dependent diagnostic, so we’re glad to see AI tech step in to help with this longstanding problem. While specificity did drop with the use of the ML-enabled tool (from 96% to 87%), given that valvular disease affects more than half of people over age 65, we think the benefit will outweigh any downsides here.
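To get a feel for that tradeoff, here is a back-of-the-envelope sketch in Python. It applies the sensitivity and specificity figures quoted above to a hypothetical group of 1,000 patients. The 50% prevalence is purely our illustrative assumption (a round stand-in for "more than half," which may well overstate moderate to severe disease), and the function and variable names are ours, not the paper's.

```python
def screen(n, prevalence, sensitivity, specificity):
    """Expected screening outcomes for n people (illustrative arithmetic only)."""
    diseased = n * prevalence
    healthy = n - diseased
    caught = diseased * sensitivity            # true positives
    missed = diseased - caught                 # false negatives
    false_alarms = healthy * (1 - specificity) # false positives
    return {
        "caught": round(caught),
        "missed": round(missed),
        "false_alarms": round(false_alarms),
    }

N = 1000
PREVALENCE = 0.5  # ASSUMPTION for illustration, not a figure from the paper

traditional = screen(N, PREVALENCE, sensitivity=0.46, specificity=0.96)
ml_enabled = screen(N, PREVALENCE, sensitivity=0.92, specificity=0.87)

print("traditional:", traditional)
print("ML-enabled: ", ml_enabled)
```

Under that assumed prevalence, the ML-enabled stethoscope would catch 460 cases instead of 230, at the cost of 65 false alarms instead of 20. Lower the prevalence and the false alarms grow while the extra catches shrink, so the balance depends heavily on who is being examined.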

Time to require all mammograms be AI-enhanced?

Literally hundreds of studies support the full integration of AI into mammography screening for breast cancer. It’s reached the point that Eric Topol concluded in his February 8 Ground Truths blog that “… all mammograms should incorporate AI,” and summarized why. Although each supporting study uses different populations and interpretation algorithms, seven years of research makes it clear that when it comes to mammography, some combination of AI plus human radiologists beats radiologists alone.

The Swedish Mammography Screening with Artificial Intelligence (MASAI) study further solidifies the evidence. It’s a 100,000-woman, two-year trial to evaluate screening with and without AI assistance. Since there can be no true gold standard to compare to AI, the study focused on “interval cancer cases,” i.e., those that become symptomatic and are diagnosed in between routine screenings. Of course, not all of them might have been detectable at screening, since many of these are faster-progressing cancers that may have been too small to find when screening took place. But the bottom line was clear: AI support proved better across the board, with 11% fewer interval cancers. Mammography sensitivity went up 6.5 points (from 73.5% to 80.0%), and positive predictive value went up 5 points (from 25.5% to 30.5%).

These improvements might have been even larger had this study taken place in the US. Why? In Sweden, each mammogram is read by two different radiologists, while the US standard is a single reading, which might be expected to miss more cases.

COMMENTARY: If this is “case closed” on AI analysis of mammograms, then it is hard to justify further large population studies that deny AI support to control groups of tens of thousands of women and delay implementation for millions in the community, just to marginally improve already strong evidence in AI’s favor. Of course, even with large studies like MASAI, the case counts that drive these conclusions are very, very small, truly needles in haystacks. The difference in interval cancers was just 0.2 cases per 1,000 women, or just 10 fewer interval cancers among the 50,000 women supported by AI. To be statistically picky, this is evidence of non-inferiority, not the higher bar of superiority.
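For readers who like to check the arithmetic, here is a small Python sketch using only the figures quoted in this item. The "implied" rates at the end are our own back-calculation from the 11% and 0.2-per-1,000 numbers, not values reported here from the study, so treat them as rough.

```python
AI_ARM_SIZE = 50_000        # women screened with AI support (from the text)
DIFF_PER_1000 = 0.2         # fewer interval cancers per 1,000 women (from the text)
RELATIVE_REDUCTION = 0.11   # "11% fewer interval cancers" (from the text)

# Absolute number of interval cancers avoided in the AI-supported arm
fewer_cases = DIFF_PER_1000 * AI_ARM_SIZE / 1000

# Back-calculated (ASSUMED, not reported here): the control-arm rate that would
# make a 0.2-per-1,000 difference equal an 11% relative reduction
implied_control_rate = DIFF_PER_1000 / RELATIVE_REDUCTION
implied_ai_rate = implied_control_rate - DIFF_PER_1000

print(f"Fewer interval cancers in AI arm: {fewer_cases:.0f}")
print(f"Implied control rate: {implied_control_rate:.2f} per 1,000")
print(f"Implied AI-arm rate:  {implied_ai_rate:.2f} per 1,000")
```

In other words, the whole effect rests on a difference of about 10 cases, with interval cancers implied to occur in fewer than 2 of every 1,000 women in either arm. That is why the statistical caution matters.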

What about the human element? Will these documented AI-augmented benefits be achieved in routine care? AI’s impact may actually be even higher in the real world. The reason has to do with something called the Hawthorne effect: when you know you’re being studied, you perform better. That’s relevant because this trial was not truly “blinded”; participants knew whether they were being supported by AI or not. So it would be only natural that those without support would be even more attentive than normal, which would shrink the gap the trial measured.

One fly in the ointment is the risk that as the AI gets better, humans get worse. (This is an important AI issue across the board, not just in the world of mammograms.) If that happens, we’re looking at a transition from AI-supported mammography to AI-only mammography. Are we really ready for that?

Measles

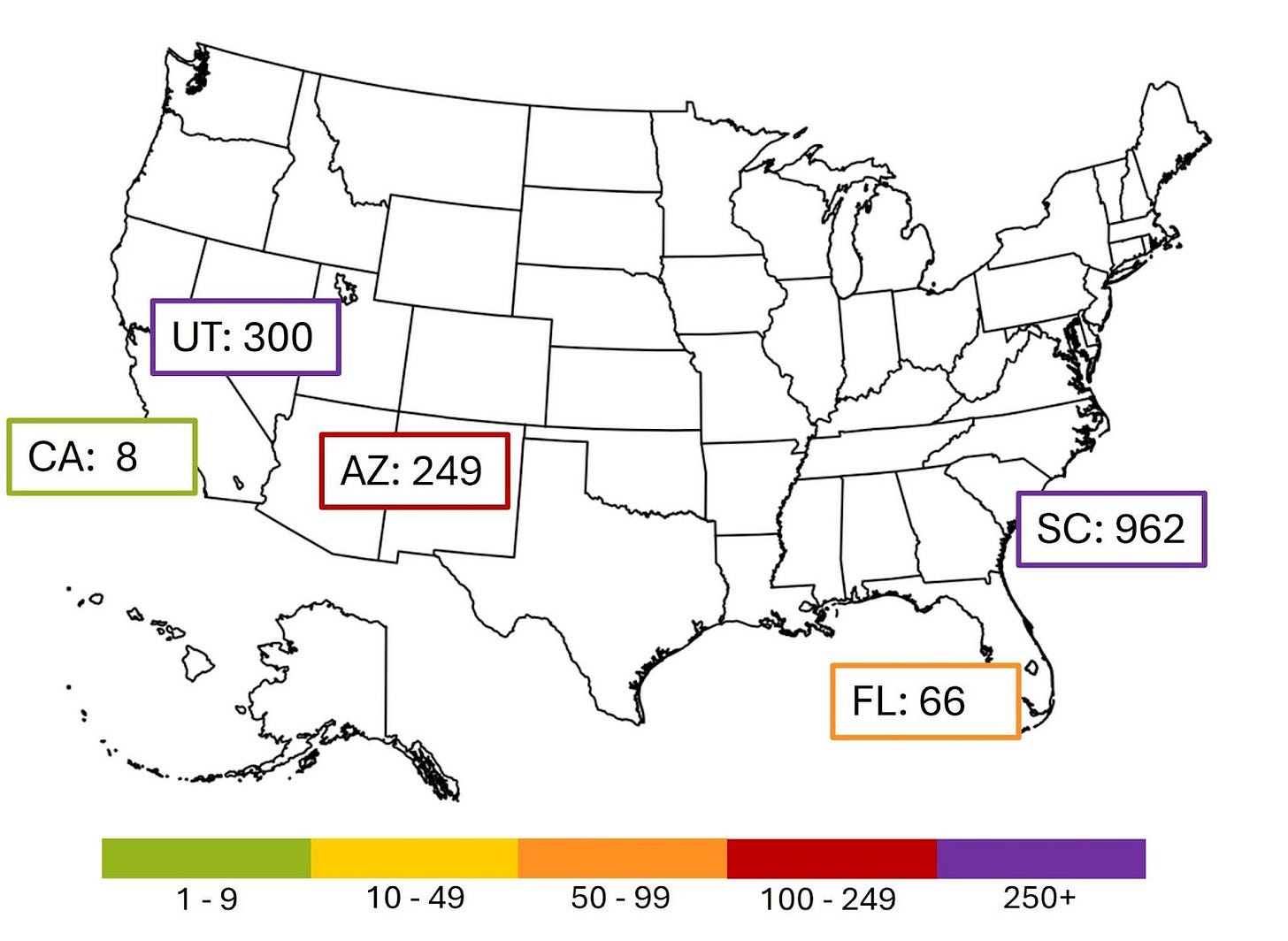

The current larger outbreaks in the US are shown below: South Carolina, Utah, Arizona, Florida and California.